Cost of AWS compute

In this article I am going to try to explain how much compute costs on AWS. I will compare three options: EC2, Fargate and Lambda.

Here are some constraints which we will use for simplicity:

- I am not considering storage-related or any other costs; I focus only on compute cost

- for simplicity I am not considering any EC2 autoscaling mechanisms

- I have selected an EC2 instance from the t family of burstable instances, which is not recommended for production workloads, only because it offers a small instance size which fits our calculations

- we are considering only the ARM architecture as it is the cheaper and more efficient option

- all the prices used in the calculations are for Frankfurt, i.e. the eu-central-1 region

- we are not considering any Reserved Instance or Savings Plans pricing

- we assume an average month has 720 hours (30 days of 24 hours)

Consider the following real-world scenario of a web application:

- reporting system used for quarterly reporting

- approximately 1000 users connect over a period of 2 weeks each quarter to submit their reports

- the reports are processed automatically and reviewed manually by a small group of admin users

- most of the year there is no real traffic except for a few admin users

- users are based in a single region, i.e. they mostly connect between 9am and 5pm local time

- there is almost no traffic at weekends or holidays

- there are some aggregation tasks running periodically every hour

In the next section I will try to calculate the compute cost of hosting an application with the above specification.

Naive approach

In this section we are not going to talk about scaling or estimating how much compute we need. We simply compute baseline prices for each of the compute options.

In order to compare these fundamentally different compute options, I have decided to calculate how much 1 minute of compute with 2 vCPU and 2 GiB costs.

| compute type | vCPU/GiB | 1 minute cost | 1 hour cost | 1 month cost | cost multiplier (vs EC2) |
|---|---|---|---|---|---|
| EC2 | 2/2 | $0.00032 | $0.0192 | $13.824 | x1 |
| Fargate (fake) | 2/2 | $0.001378 | $0.08268 | $59.5296 | x4.3 |
| Fargate (real) | 2/4 | $0.0015143 | $0.09086 | $65.4192 | x4.7 |
| Lambda | 2/2 | $0.001602 | $0.09612 | $69.2064 | x5 |

How I calculated the cost (for nerds and nonbelievers)

I have calculated different price points for each service. Pricing pages usually give you the hourly cost; I have also calculated the cost of 1 minute because it is closer to real-world traffic. I've also calculated the monthly cost because it is very hard for people to compare small numbers: when we work in tens instead of fractions, everyone can picture the difference clearly.

EC2

For the t4g.small instance type, which has 2 vCPU and 2 GiB, the hourly rate in the eu-central-1 region is $0.0192.

To get the cost of 1 minute (60 seconds), we just divide $0.0192 by 60, which gives us $0.00032.

Since we have the hourly rate, we can easily calculate the monthly rate by multiplying $0.0192 by the 720 hours in a month, which gives us $13.824.
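The EC2 arithmetic above is simple enough to script. Below is a minimal Python sketch, assuming the on-demand t4g.small rate of $0.0192 per hour quoted above and the article's 720-hour month; `always_on_costs` is just an illustrative helper name.

```python
HOURS_PER_MONTH = 720        # the article's simplification: 30 days * 24 hours
T4G_SMALL_HOURLY = 0.0192    # on-demand t4g.small, eu-central-1, $ per hour (quoted above)


def always_on_costs(hourly_rate: float) -> dict[str, float]:
    """Minute, hour and month cost derived from an hourly rate, for a resource that never stops."""
    return {
        "minute": hourly_rate / 60,
        "hour": hourly_rate,
        "month": hourly_rate * HOURS_PER_MONTH,
    }


ec2 = always_on_costs(T4G_SMALL_HOURLY)
for period, cost in ec2.items():
    print(f"{period:>6}: ${cost:.5f}")
```

The same helper works for any instance type; only the hourly rate changes.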

Fargate

When selecting a Fargate task size, you cannot choose vCPU and GiB in a 1:1 ratio. The smallest memory you can pair with a given number of vCPU is in a 1:2 ratio, so 2 vCPU requires at least 4 GiB. In theory, since the pricing page lists a price per vCPU and per GiB, we can calculate the cost of 2 vCPU and 2 GiB, but in real life you cannot select such a combination. Hence we calculate two price points: one for a fake (theoretical) Fargate task with 2 vCPU and 2 GiB and one for a real Fargate task with 2 vCPU and 4 GiB.

The price per vCPU per hour in eu-central-1 for the ARM architecture is $0.03725, and the price per GiB per hour is $0.00409.

An hour of the fake Fargate task costs $0.03725 * 2 + $0.00409 * 2 = $0.08268. An hour of the real Fargate task then costs $0.03725 * 2 + $0.00409 * 4 = $0.09086.

Calculating the minute rate is easy: we just divide the hourly rate by 60. Fake: $0.08268 / 60 = $0.001378; real: $0.09086 / 60 = ~$0.0015143.

For the monthly rate we again multiply the individual hourly rates by 720 hours. Fake: $0.08268 * 720 = $59.5296; real: $0.09086 * 720 = $65.4192.
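The same calculation for Fargate, as a minimal sketch assuming the ARM rates quoted above. It also encodes the smallest memory Fargate accepts for 1, 2 and 4 vCPU (2, 4 and 8 GiB, based on my reading of the Fargate task size table; double-check the current documentation), which is why the "fake" 2 vCPU/2 GiB task cannot actually be launched. The helper names are hypothetical.

```python
HOURS_PER_MONTH = 720
VCPU_HOUR = 0.03725  # $ per vCPU per hour, ARM, eu-central-1 (quoted above)
GIB_HOUR = 0.00409   # $ per GiB per hour, ARM, eu-central-1 (quoted above)

# Assumed minimum memory (GiB) Fargate allows for a given vCPU count.
MIN_MEMORY_GIB = {1: 2, 2: 4, 4: 8}


def fargate_hourly(vcpu: int, memory_gib: int) -> float:
    """Hourly price of a task: every vCPU and every GiB is billed separately."""
    return vcpu * VCPU_HOUR + memory_gib * GIB_HOUR


def is_selectable(vcpu: int, memory_gib: int) -> bool:
    """True if the memory meets the (assumed) minimum for this vCPU count."""
    return vcpu in MIN_MEMORY_GIB and memory_gib >= MIN_MEMORY_GIB[vcpu]


fargate = {}
for label, vcpu, memory_gib in [("fake", 2, 2), ("real", 2, 4)]:
    hourly = fargate_hourly(vcpu, memory_gib)
    fargate[label] = {
        "selectable": is_selectable(vcpu, memory_gib),
        "minute": hourly / 60,
        "hour": hourly,
        "month": hourly * HOURS_PER_MONTH,
    }
    print(label, fargate[label])
```

Note that the monthly figure is computed from the exact hourly rate rather than from the rounded minute rate, which is why the real task comes to $65.4192.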

Lambda

Lambda works differently. You can only choose the memory size, and vCPU is allocated proportionally to it in steps, as explained in this article. For a 2 GiB Lambda function we should be allocated 2 vCPU.

The price per 1 ms for a 2 GiB Lambda function in eu-central-1 with the ARM architecture is $0.0000000267; you can check out the pricing page if you don't believe me.

Here we ignore all other costs associated with Lambda, e.g. per-request charges.

The price for a minute of running is calculated as $0.0000000267 * 1000 * 60 = $0.001602.

The price for an hour is calculated as $0.001602 * 60 = $0.09612.

The price for a month is calculated as $0.09612 * 720 = $69.2064.
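A minimal sketch of the Lambda numbers, assuming the $0.0000000267 per-ms price quoted above and, as in the text, ignoring request charges. Lambda bills duration in 1 ms increments rounded up, so the helper rounds each invocation up before pricing it; `duration_cost` is a hypothetical helper name.

```python
import math

HOURS_PER_MONTH = 720
PRICE_PER_MS = 0.0000000267  # 2 GiB ARM function, eu-central-1 (quoted above)


def duration_cost(duration_ms: float) -> float:
    """Duration charge of one invocation; billed time is rounded up to whole milliseconds."""
    return math.ceil(duration_ms) * PRICE_PER_MS


lambda_minute = duration_cost(60 * 1000)  # one invocation running for a full minute
lambda_hour = lambda_minute * 60
lambda_month = lambda_hour * HOURS_PER_MONTH
print(f"minute ${lambda_minute:.6f}, hour ${lambda_hour:.5f}, month ${lambda_month:.4f}")

# A 120.3 ms invocation is billed as 121 ms.
print(f"120.3 ms invocation: ${duration_cost(120.3):.12f}")
```

The per-invocation view matters later: with Lambda you pay per request handled, not per hour the box exists.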

As you can see from the table above, it seems that using EC2 for a steady load would be approximately 4 times cheaper than Fargate and even 5 times cheaper than Lambda.

Claiming this would unfortunately be extremely naive. Running a service on EC2 or Fargate might be very similar, but running a service with AWS Lambda is entirely different. Let's discuss this in the next section.
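One way to make the naive comparison less naive is to ask how busy a workload has to be before Lambda's compute bill catches up with an always-on box. Below is a minimal sketch using the monthly figures from the table (request charges ignored). "Busy time" here means the summed execution time of all invocations, so two overlapping requests count twice, whereas a single EC2 instance serves them on the same 2 vCPU.

```python
HOURS_PER_MONTH = 720
EC2_MONTH = 13.824        # t4g.small running 24/7 (table above)
FARGATE_MONTH = 65.4192   # 2 vCPU / 4 GiB task running 24/7 (table above)
LAMBDA_MONTH = 69.2064    # 2 GiB function executing for all 720 hours (table above)


def break_even_share(always_on_month: float, lambda_month: float = LAMBDA_MONTH) -> float:
    """Share of the month Lambda can spend executing before it costs as much as always-on compute."""
    return always_on_month / lambda_month


for name, monthly in [("EC2", EC2_MONTH), ("Fargate", FARGATE_MONTH)]:
    share = break_even_share(monthly)
    print(f"vs {name}: Lambda is cheaper below {share:.0%} busy time "
          f"(~{share * HOURS_PER_MONTH:.0f} of {HOURS_PER_MONTH} hours)")
```

With these numbers Lambda stays cheaper than the t4g.small as long as it executes for less than roughly a fifth of the month (about 144 hours), and cheaper than the Fargate task for up to roughly 95% of the month.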

Back to the future Earth

When you run a standard web application on EC2, you probably need to run a web server or proxy in front of your workers. Usually this is httpd or nginx, which then invokes a worker like PHP. If you are all in on JavaScript, you might instead run a Node.js server built with Express, Koa or even Hono.

With this approach, the resources of the server, namely 2 vCPU and 2 GiB, are shared between your application web server, the operating system and any other services installed on your instance. When a request hits your EC2 instance, the application web server allocates a chunk of memory and runs the code which handles the request until the response is returned. Then the memory is usually freed and ready for handling another request. When many requests hit the server and all the resources are exhausted, your server might still buffer the requests, which causes them to be handled more slowly with higher latency, but they are eventually handled. If your traffic spikes, that's OK because your service is still available, just a bit slower. When your service is under heavy load for longer periods of time, depending on the quality of the application web server, your service might even go down, resulting in a service disruption. This of course assumes no auto-scaling, queueing or caching: we are talking about a single 2 vCPU/2 GiB box.

Fargate works similarly (when you omit autoscaling). The main difference from EC2 is that with Fargate you are not managing the underlying operating system, i.e. you just provide a Docker image and Fargate runs it on the selected vCPU/GiB configuration for you. You don't need to patch your servers, handle hardware deprecation or anything else. AWS rightfully puts a price on this, which results in a 4.3-4.7x higher price than EC2, where you are responsible for all of this yourself. Apart from this, Fargate is used very similarly to EC2, i.e. you are still running an application web server within the container, which allocates chunks of memory to individual requests.

The above text is written for illustration purposes and is not meant to describe exactly how things run on EC2 or Fargate. In real life there will be other components involved, like load balancers, target groups and auto-scaling groups. I have simplified this on purpose for clarity.

With Lambda this is very different. Lambda is not supposed to run any application web server.1 Lambda is supposed to handle a single request (mostly an HTTP request in our scenario), return a response and then die. When your users generate multiple requests, the Lambda service will spawn multiple instances of your Lambda function. Here the 2 vCPU and 2 GiB are not shared with an application web server nor with other in-flight requests. Each in-flight request is allocated its own instance of the Lambda function. Concurrently. You can still run an entire web server within a Lambda function, for instance packaged as a Docker image, but it would be pure overhead, as each function instance would still handle only a single request at a time. If you want to use Lambda properly, you will need to study its architecture and most likely change your mental model.2

What does "concurrently" mean in this case?

By default, each AWS account can run up to 1000 concurrent Lambda invocations per region, and Lambda can scale up to that level quickly. This concurrency pool is shared between everything that invokes Lambda in your account, e.g. when some asynchronous task uses 10 concurrent invocations, your web traffic can only use the remaining 990, assuming no other services in the account use additional concurrency. Lambda can go beyond 1000 concurrent invocations if you request a quota increase, but it scales up gradually rather than all at once.

The real lesson here, though, is that 1000 users do not equal 1000 concurrent invocations.

It is very hard to calculate how many users it takes to produce 1000 concurrent invocations, as it varies from application to application and depends on several variables. For instance:

- how much time users spend on your site

- how the website is architected: for an SPA, most of the interaction happens client-side and the client only occasionally sends a request to the backend

- how many users are browsing the site at the same moment

- proximity of particular users to the service and latency associated with it

In other words, for Lambda to run functions concurrently, multiple users of the website must hit the Lambda service at the same time, meaning multiple requests are in flight simultaneously. This can of course happen, but the chances of that for 1000 users of a reporting web application are slim; I would expect concurrency in the tens, not hundreds or thousands.
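A common way to turn traffic into a concurrency estimate is Little's law: average concurrency equals the request rate multiplied by the average duration. The sketch below applies it to the reporting scenario. Every input apart from the 1000 users and the 2-week, 9am-to-5pm window is a hypothetical number picked for illustration, not a measurement.

```python
def concurrency(requests_per_second: float, avg_duration_s: float) -> float:
    """Little's law: average number of requests in flight."""
    return requests_per_second * avg_duration_s


USERS = 1000
WORKING_SECONDS = 10 * 8 * 3600  # two working weeks, 9am to 5pm
REQUESTS_PER_USER = 500          # hypothetical: API calls per user per reporting period
AVG_DURATION_S = 0.3             # hypothetical: 300 ms per invocation
PEAK_FACTOR = 20                 # hypothetical: busiest moment vs the average rate

avg_rps = USERS * REQUESTS_PER_USER / WORKING_SECONDS
avg_concurrency = concurrency(avg_rps, AVG_DURATION_S)
peak_concurrency = avg_concurrency * PEAK_FACTOR
print(f"average: {avg_rps:.2f} req/s -> {avg_concurrency:.2f} concurrent invocations")
print(f"peak (x{PEAK_FACTOR}): {peak_concurrency:.1f} concurrent invocations")
```

Even with a generous peak factor, these inputs land at around ten concurrent invocations, nowhere near 1000.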

To be perfectly clear, there might be situations where 1000 users produce 1000 concurrent invocations, for instance an event where users are motivated to "click" somewhere at the same time, like a ticket sale. Here users rapidly click and refresh the site over a period of a few minutes. Lambda should still sustain that, even if there might be some degradation of service because of slower scaling.

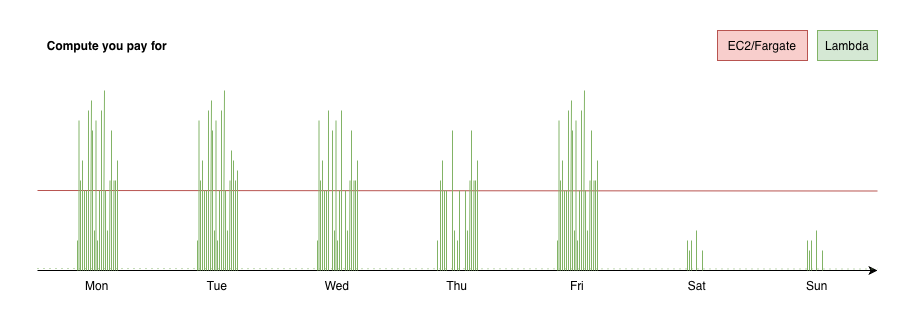

To get a more realistic, less naive picture, I've prepared the figure above, which depicts what the traffic for our reporting application could look like.

In the figure I've tried to depict the compute which you actually pay for when using EC2/Fargate and Lambda. It is based on the traffic pattern described at the beginning of this article. You can see that for EC2 or Fargate (red line3) you are paying 24/7 no matter what traffic you get. For Lambda (green spikes) you only pay for the time Lambda actually runs, i.e. when it is invoked by users on your site. I have also included a dashed green line at the bottom which accounts for the periodic aggregation task.

Of course, this assumes that you've built your application in a modern way, meaning it is most likely an SPA (Single-page Application) JavaScript frontend served from an S3 bucket behind a CloudFront distribution, with Lambda used only for API communication. You can build a similar architecture with EC2/Fargate, but you will pay for those even when the API is not being hit. If you don't like the idea of an SPA because you are worried about search engine ranking, I recommend one of the modern JavaScript frameworks like SvelteKit or Remix/React Router 7, which use progressive enhancement: you get a server-side rendered HTML page on first load, and when the user interacts with the site it behaves as an SPA. If you want to start with SvelteKit on Lambda, I encourage you to try my kit-on-lambda library.

The figure only shows a single week of traffic. Across the whole year we would have 2 weeks of similar traffic per quarter when the site is active for reporting, with usage dropping sharply in between. For EC2/Fargate you would still pay for the entire year at the same hourly price.
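To put the yearly pattern into numbers, here is a rough sketch comparing a year of always-on compute with a year of Lambda invocations. The workload figures (requests per user, durations, aggregation runtime) are hypothetical, admin traffic outside the reporting periods is left out, and the $0.20 per million requests charge is an assumption you should check against the pricing page. The year is 12 months of 720 hours, as elsewhere in the article.

```python
MONTHS = 12
HOURS_PER_MONTH = 720
EC2_MONTH = 13.824                    # t4g.small 24/7 (table above)
FARGATE_MONTH = 65.4192               # 2 vCPU / 4 GiB task 24/7 (table above)
PRICE_PER_MS = 0.0000000267           # 2 GiB ARM Lambda, eu-central-1 (quoted above)
PRICE_PER_REQUEST = 0.20 / 1_000_000  # assumed Lambda request charge

# Hypothetical workload
REPORTING_PERIODS = 4                         # one 2-week window per quarter
USERS = 1000
REQUESTS_PER_USER = 500                       # per reporting period
AVG_DURATION_MS = 300
AGGREGATION_RUNS = MONTHS * HOURS_PER_MONTH   # the hourly aggregation task
AGGREGATION_DURATION_MS = 10_000


def lambda_cost(invocations: int, duration_ms: int) -> float:
    """Duration charge plus request charge for a batch of identical invocations."""
    return invocations * (duration_ms * PRICE_PER_MS + PRICE_PER_REQUEST)


user_invocations = REPORTING_PERIODS * USERS * REQUESTS_PER_USER
lambda_year = (lambda_cost(user_invocations, AVG_DURATION_MS)
               + lambda_cost(AGGREGATION_RUNS, AGGREGATION_DURATION_MS))
ec2_year = EC2_MONTH * MONTHS
fargate_year = FARGATE_MONTH * MONTHS

print(f"EC2     ${ec2_year:8.2f}")
print(f"Fargate ${fargate_year:8.2f}")
print(f"Lambda  ${lambda_year:8.2f}")
```

With these made-up inputs Lambda comes to roughly $19 a year against about $166 for the EC2 instance and about $785 for Fargate. The exact figure matters less than the shape: the always-on options bill for all 8640 hours regardless of traffic.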

Conclusion

Using Lambda for hosting web applications is a great choice, especially when you are not sure about your traffic patterns or when the traffic is not very steady. By architecting with AWS Lambda in mind, you can achieve very cost-efficient solutions which are highly available and scalable.

EC2/Fargate is great for steady workloads, e.g. when you process a stream of data and can utilize the whole time EC2/Fargate is running. In this case you can save even more with Reserved Instances for EC2, or with Savings Plans, which cover EC2, Fargate and even Lambda.

You shouldn't be scared to architect your applications around Lambda, especially now that Lambda Managed Instances (LMI) were introduced last year. This means you can stick to the Lambda mindset, and when you realize your workload is no longer unpredictable, you can switch to LMI to save on compute.

You can achieve the same architecture as Fargate or Lambda using EC2. After all, both Fargate and Lambda run on EC2: yes, even though they are serverless compute options, they run on a fleet of EC2 instances managed by AWS. That management is the premium you pay for, and it is why Fargate and Lambda are 4-5x more expensive than EC2 for the same steady load. As I said, you can set it up yourself, or you can let AWS "manage your cluster" and innovate along the way whilst you focus on your product and your customers.